NLP Explained: Why Your Phone Thinks You're Swearing

Estimated read time: 7-8 minutes

Updated 16 Mar 2026

NLP is the layer that sits between you and every AI tool you use.

It takes your messy, sarcastic, typo-filled sentences and turns them into something the AI can actually work with, by breaking your words into pieces, mapping their meaning, and focusing on the bits that matter most.

In 2026 it powers everything from autocorrect to voice assistants to spam filters, and from August 2026 the EU AI Act requires AI-generated content to be clearly labelled, which means NLP is no longer just a tech detail. It is a business consideration.

NLP runs autocorrect, spam filters, chatbots and sentiment analysis.

It reads intent in text at scale.

It nails patterns but trips on sarcasm, culture and bias from training data.

For your business: Use it for drafts, customer insights and 24/7 service. Keep human oversight for tone. Start small. No tech team needed.

Your phone just auto-corrected "I'll be there soon" to "I'll bee their spoon."

Your smart speaker thought you asked about duck recipes when you said something entirely different. If you are new to this series, our plain-English guide to what AI actually is covers the full picture before we go any deeper here.

These are not random failures. They are a window into one of the most genuinely difficult problems in computer science: teaching a machine to understand how humans actually talk.

Welcome to Natural Language Processing, or NLP. The field that sits between you and every AI tool you use, quietly trying to make sense of everything you throw at it.

In Blog 1: Machine Learning for Beginners, we established that AI learns by spotting patterns across enormous amounts of data.

In Blog 2: What Is Deep Learning?, we built out the kitchen brigade: the layered specialist architecture that processes complex information at scale.

NLP is the waitstaff that takes your order and translates it before it ever reaches the kitchen.

Without it, you would need to speak in precise, structured commands for any AI to understand you. With it, you can type "wht time does the thing start tmrw" and the system works out what you mean.

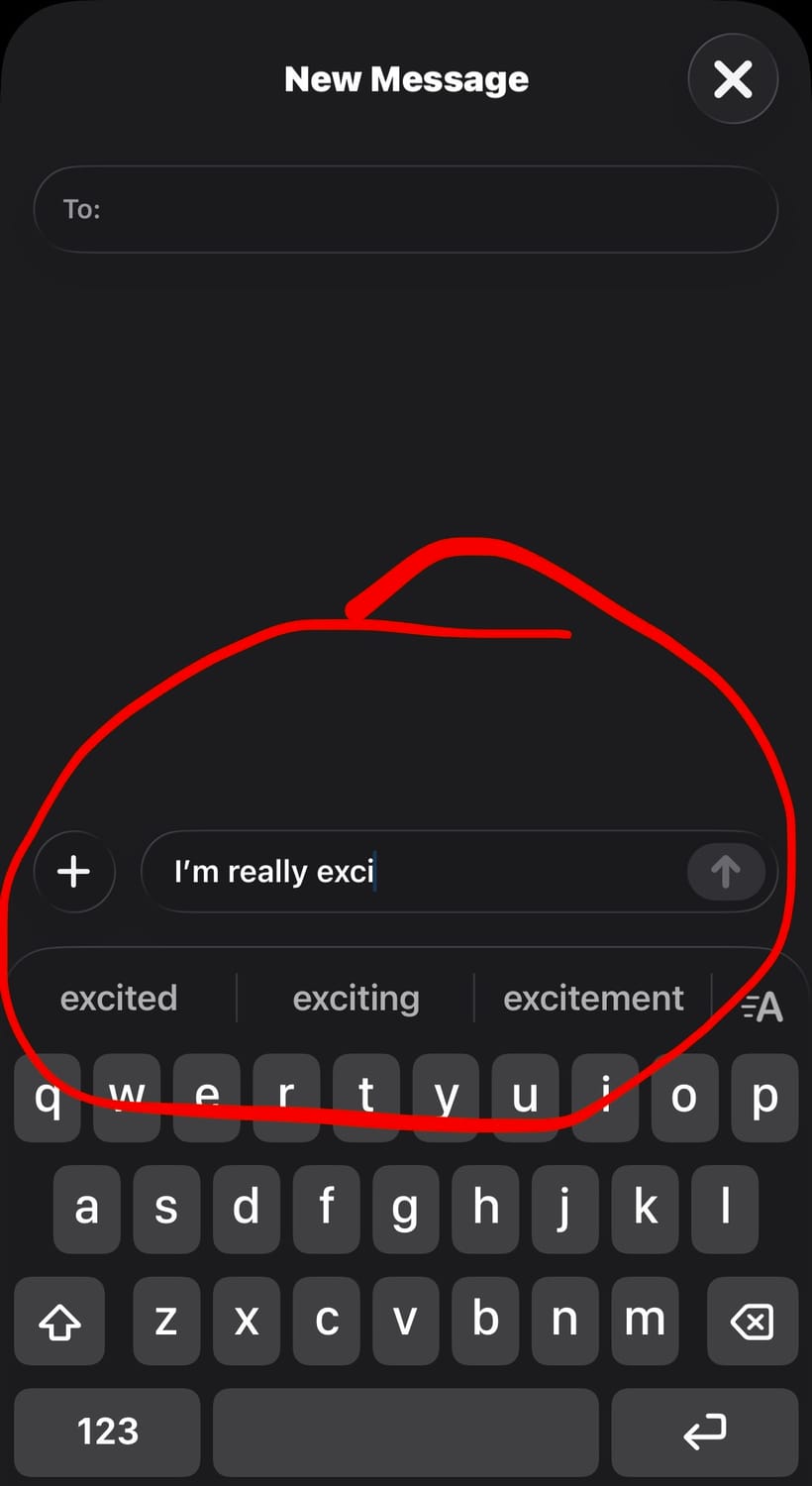

👉 Before We Go Further: How Can You See NLP In Action Right Now?

Open your phone's messaging app. Type "I'm really exci" and stop. Look at the autocomplete suggestions.

That prediction is NLP in action.

Your phone has processed billions of words and learned that "exci" is almost always the start of "excited." It is making a probabilistic guess based on patterns, not reading your mind.

Keep that in mind as we go through how it actually works. Every autocomplete, every voice assistant response, every spam filter decision runs on the same underlying principles.

Type half a word into your phone and it finishes your sentence for you. That is NLP working in the background, predicting what comes next based on billions of words it has already seen.
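If you like seeing ideas in code, here is a minimal sketch of that guess. It assumes a tiny made-up set of sentences in place of the billions of words a real phone model has seen, and the function name `suggest` is invented for this example. It simply counts how often each word appears and offers the most common ones that start with what you typed.

```python
from collections import Counter

# Toy "training data" (made up for illustration). A real model has seen
# billions of words and uses far more than simple word counts.
corpus = (
    "i am really excited about the trip "
    "she was excited to see you "
    "we are so excited "
    "that was an exciting match "
    "he felt excited and nervous"
).split()

word_counts = Counter(corpus)

def suggest(prefix, k=3):
    """Return up to k known words that start with prefix, most frequent first."""
    matches = [(word, count) for word, count in word_counts.items()
               if word.startswith(prefix)]
    # Sort by count (highest first), then alphabetically to break ties.
    matches.sort(key=lambda pair: (-pair[1], pair[0]))
    return [word for word, _ in matches[:k]]

print(suggest("exci"))  # prints ['excited', 'exciting']
```

"Excited" comes first only because it appears more often in the sample text. Nothing in the code knows what the word means.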

💬 What Is Natural Language Processing (NLP)?

NLP is the set of techniques that allow machines to read, interpret, and generate human language.

That covers a wide range of tasks: understanding what a sentence means, identifying the emotion behind it, translating it into another language, generating a relevant response, and deciding whether that response should be a one‑word answer or a detailed explanation.

Human language is not structured data. It is ambiguous, inconsistent, full of implied meaning, and constantly changing.

"I could eat" means you are hungry. "That film was something else" could mean brilliant or terrible depending on tone. "Fine" means at least four completely different things depending on who says it and how.

Standard software cannot handle this. It needs explicit rules.

NLP, built on top of the deep learning architecture covered in Blog 2: What Is Deep Learning?, learns to handle ambiguity the same way it learns everything else: from an enormous volume of examples.

🧠 Quick fact: Over 70% of online customer service chats now use NLP-powered bots at some stage, often without people even realising.

⚙️ How Does NLP Actually Work (In Simple Terms)?

You do not need the technical details, but you do need three core ideas, and the right analogies make them stick.

What are Tokens (the Lego bricks)?

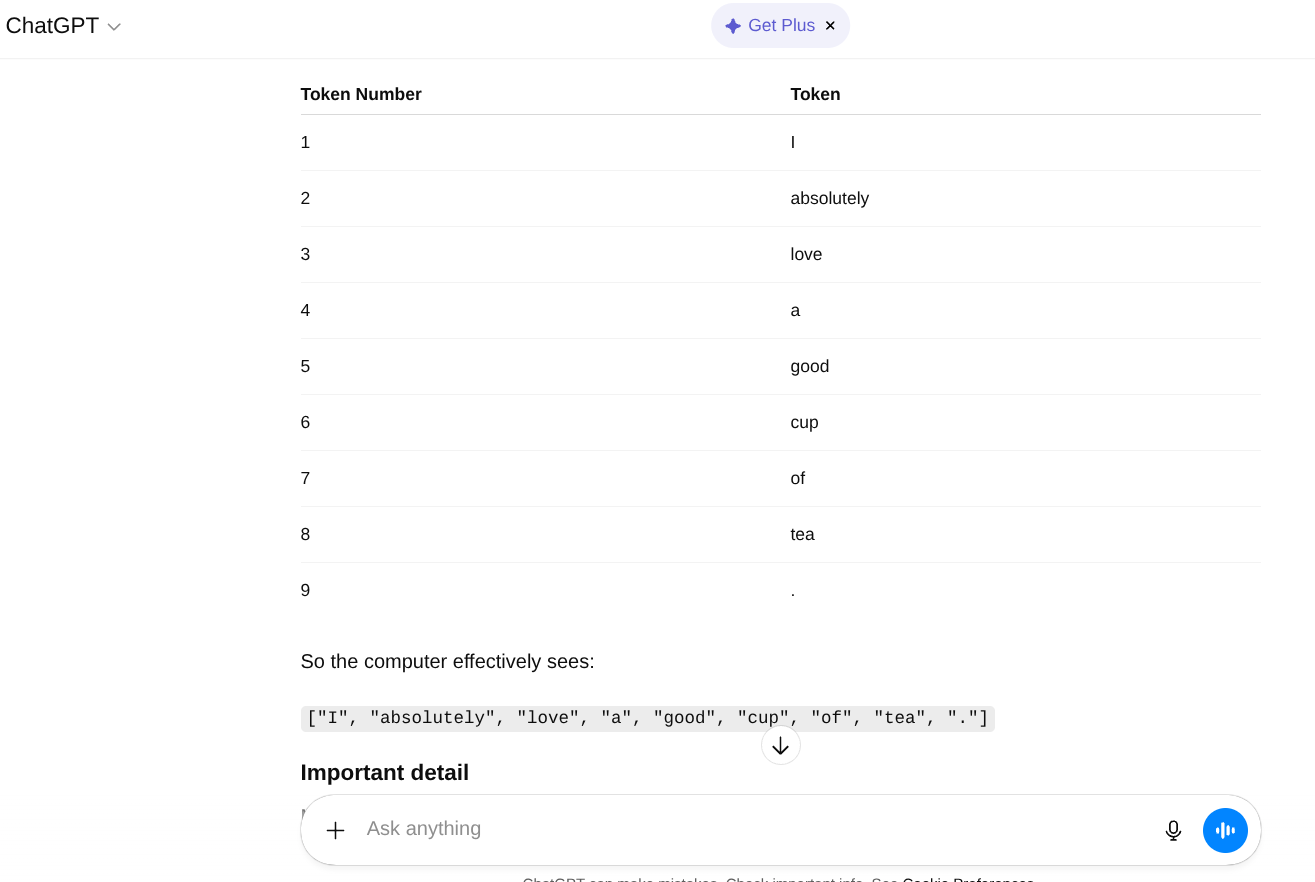

Tokens are the small pieces your text is broken into so an AI model can process it.

Before any AI model can process your words, it has to break them into smaller pieces, and these pieces are called tokens.

AI does not see words the way you do. It sees Lego bricks. Your sentence gets snapped apart into individual pieces before anything else happens.

"I love pasta" becomes roughly: [I], [love], [past], [a]. Not quite words. Not quite letters. Something in between.

Why does this matter?

Because it lets the system handle words it has never seen before. "Unbelievableness" can be broken into familiar pieces and processed even if that exact word never appeared in training data. The bricks are reusable even when the structure is new.
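For the curious, the Lego idea can be sketched in a few lines. This assumes a tiny hand-written vocabulary of pieces (`VOCAB` is made up for this example) and uses greedy longest-match splitting, which is one simple strategy. Real tokenisers learn tens of thousands of pieces from data and differ in the details.

```python
# Hypothetical toy vocabulary of known pieces, hand-written for illustration.
VOCAB = {"un", "believ", "able", "ness", "love", "past", "a", "i"}

def tokenize(word, vocab=VOCAB):
    """Split one word into known pieces, always taking the longest match."""
    word = word.lower()
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest possible piece first, then shrink.
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                pieces.append(word[start:end])
                start = end
                break
        else:
            # No known piece fits here: fall back to a single character.
            pieces.append(word[start])
            start += 1
    return pieces

print(tokenize("Unbelievableness"))  # prints ['un', 'believ', 'able', 'ness']
print(tokenize("pasta"))             # prints ['past', 'a']
```

The word "unbelievableness" is not in the vocabulary, yet every piece of it is. That is the reusable-bricks point in action.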

This also explains some of the stranger autocorrect failures. The system is working with fragments, not whole words, and sometimes reassembles them in unexpected ways.

If you want to see a more technical breakdown of exactly how tokenisation works, Hugging Face has a clear explanation at huggingface.co that is written for non-engineers and worth five minutes if this concept clicked for you.

This is what AI actually sees when you type a sentence. Not words, but fragments. Each piece gets processed individually before the model works out what the whole thing means.

What Are Embeddings (The Giant Map)?

Embeddings are the way an AI turns tokens into points on a giant “meaning map” where similar words sit close together.

Once the text is broken into tokens, each one gets placed on a map.

Not a literal map. A mathematical one. But the geography metaphor is useful: words that tend to appear in similar contexts end up close to each other on this map.

“Coffee” sits near “Morning,” “Caffeine,” “Tired,” and “Mug.” “Bank” sits near “River” in one part of the map and near “Loan,” “Interest,” and “Account” in a completely different part.

This is how the system handles meaning. It does not look up definitions. It uses location. Where a word sits on the map, and what surrounds it, tells the model what the word tends to mean.
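Here is a rough sketch of "meaning is location". The coordinates below are hand-picked for illustration and are an assumption of this example. Real embeddings are learned from data and have hundreds or thousands of numbers per word, not three. Closeness is measured with cosine similarity, a common way to compare two points on this kind of map.

```python
import math

# Hypothetical 3-number coordinates, hand-picked so related words sit together.
EMBEDDINGS = {
    "coffee":   [0.9, 0.8, 0.1],
    "caffeine": [0.8, 0.9, 0.2],
    "mug":      [0.7, 0.6, 0.3],
    "loan":     [0.1, 0.2, 0.9],
    "interest": [0.2, 0.1, 0.8],
}

def cosine_similarity(a, b):
    """Return a score near 1.0 when two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def nearest(word, k=2):
    """Return the k words whose coordinates are most similar to the given word."""
    target = EMBEDDINGS[word]
    scored = [(other, cosine_similarity(target, vector))
              for other, vector in EMBEDDINGS.items() if other != word]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return [other for other, _ in scored[:k]]

print(nearest("coffee"))
print(nearest("loan"))
```

With these made-up coordinates, "coffee" lands closest to "caffeine" and "loan" lands closest to "interest". No definitions are involved, only distance.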

This is also how the system handles context.

When you use "bank" in a sentence, the surrounding words act as a postcode. "River bank" locates it in one neighbourhood. "Bank account" locates it in another.

The model reads the postcode and goes to the right part of the map.
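The postcode idea can be sketched with a much older and simpler trick than anything inside a modern model: count how many clue words from each neighbourhood appear around the word (a simplified version of the classic Lesk method). The clue lists and the name `guess_sense` are assumptions made up for this example.

```python
# Hypothetical clue words for two meanings of "bank".
SENSES = {
    "riverside": {"river", "water", "fishing", "muddy", "shore"},
    "finance": {"loan", "interest", "account", "money", "deposit"},
}

def guess_sense(sentence, senses=SENSES):
    """Pick the meaning whose clue words overlap most with the sentence."""
    cleaned = sentence.lower().replace(".", "").replace(",", "")
    words = set(cleaned.split())
    scores = {name: len(words & clues) for name, clues in senses.items()}
    best = max(scores, key=scores.get)
    # If no clue words appear at all, admit we cannot tell.
    return best if scores[best] > 0 else None

print(guess_sense("We sat on the river bank fishing."))       # prints riverside
print(guess_sense("I opened a bank account to get a loan."))  # prints finance
```

A real model does this with learned numbers rather than word lists, but the principle is the same: the surrounding words decide which meaning wins.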

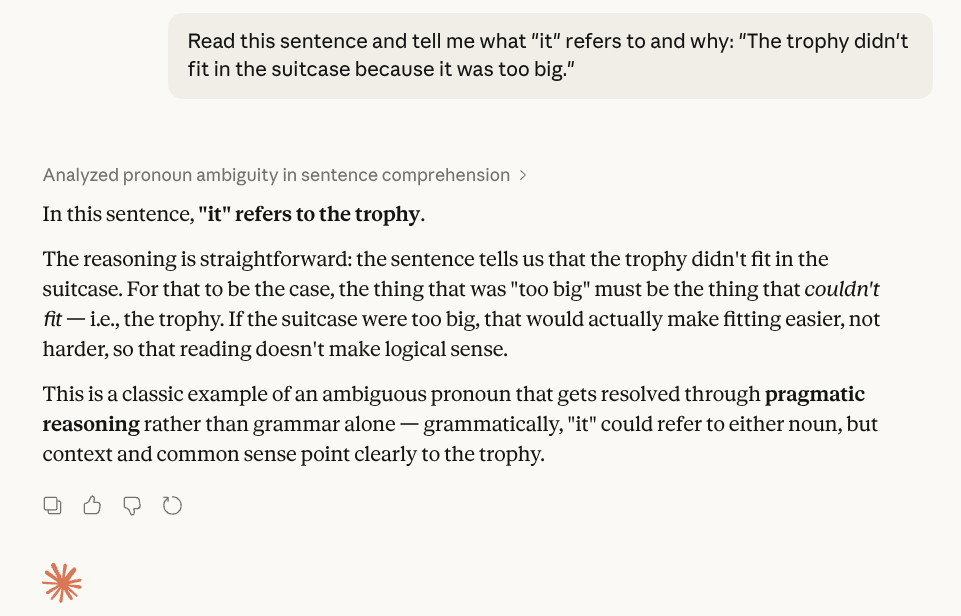

What Is Attention (The Spotlight)?

Attention is the mechanism that lets a language model focus on the most relevant words in a sentence instead of treating every word as equally important.

Long sentences create a problem. By the time the system reaches the end, what do the words at the beginning mean in context?

The attention mechanism is a spotlight.

Rather than trying to hold the entire sentence in equal focus, the model learns to point its spotlight at the most relevant words when making each decision.

In the sentence "The trophy didn't fit in the suitcase because it was too big," the spotlight lands on "trophy" and "suitcase" when it needs to resolve what "it" refers to.

It figures out that "too big" applies to the trophy, not the suitcase, by attending to the right words at the right moment.
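As a rough sketch, the spotlight is a set of weights that add up to 1, one weight per word. The code below uses scaled dot-product attention, the scoring step used in transformers. The two-number vectors are hand-picked for this example so that "trophy" scores highest. In a real model those numbers are learned, not chosen.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that add up to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(query, keys):
    """Scaled dot-product attention: softmax(query . key / sqrt(d))."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
              for key in keys]
    return softmax(scores)

words = ["trophy", "fit", "suitcase", "big"]
# Hypothetical key vectors, one per word, hand-picked for illustration.
keys = [[3.0, 1.0], [0.0, 0.5], [2.0, -1.0], [0.5, 2.0]]
# Hypothetical query vector for the word "it".
query_for_it = [2.0, 1.0]

weights = attention_weights(query_for_it, keys)
for word, weight in zip(words, weights):
    print(f"{word:9s} {weight:.2f}")
```

The largest weight goes to "trophy", so that is where the model "looks" when deciding what "it" refers to.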

This is the same mechanism that allows transformers, the architecture behind GPT-5.2 and Claude 4.6, to handle documents, long emails, and multi-turn conversations without losing the thread.

The spotlight moves.

The context stays coherent.

One ambiguous sentence, the right answer, and a clear explanation of why. This is the attention mechanism doing its job, focusing the spotlight on the words that actually matter to resolve the meaning.

💥 What Is Different About NLP In 2026?

NLP has changed significantly in the past two years. The tools you are using in March 2026 are not the same as the tools from 2023, and two specific upgrades are worth knowing about.

What is Adaptive Thinking?

Adaptive Thinking is a feature where the model chooses how hard to think before responding, using more reasoning for tricky inputs and less for simple ones.

Claude 4.6 now uses what Anthropic calls Adaptive Thinking.

In plain terms, the model can choose how hard to think before responding. A message like “Hello” gets an instant reply because the model does not need to reason.

But a sarcastic sentence like “Oh great, another Monday” requires a moment of processing to identify that “great” is not being used sincerely.

The system allocates more computational effort to genuinely ambiguous or complex inputs and less to simple ones.

This is the waitstaff equivalent of a seasoned server who spots immediately that your "I'm fine" is a polite fiction, while also not overthinking it when you just want the bill.

What is Context Compaction?

Context compaction is how modern language models compress earlier parts of a conversation into summaries so they can remember the meaning without keeping every word.

Earlier language models had a memory problem.

In a long conversation, they would effectively “forget” what was said at the beginning by the time they reached the end. The context window, the amount of text a model could hold in view at once, had a hard limit.

2026 models handle this with context compaction. Rather than dropping old content when the window fills up, the model compresses earlier parts of the conversation into summaries and retains those.

The details are condensed, but the meaning is preserved.

Think of it as the waitstaff keeping a tidy notepad. They do not memorise every word of every previous conversation.

But they keep a running summary: "Table 4 has been here before, prefers the fish, asked about allergies." The important context stays accessible even when the verbatim detail is gone.
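The notepad can be sketched like this. It is an illustration under stated assumptions, not how any particular product implements compaction. The class `CompactingMemory` is invented for this example, and `summarise` is a stand-in that just keeps the first six words of each turn, where a real system would have the model write the summary.

```python
def summarise(turns):
    """Hypothetical stand-in for a model-written summary.

    Keeps only the first six words of each turn.
    """
    return " / ".join(" ".join(turn.split()[:6]) for turn in turns)

class CompactingMemory:
    """Keep the latest turns word for word and fold older ones into a summary."""

    def __init__(self, max_recent=3):
        self.max_recent = max_recent
        self.summary = ""
        self.recent = []

    def add(self, turn):
        self.recent.append(turn)
        if len(self.recent) > self.max_recent:
            # Move the oldest turns out of the verbatim window.
            overflow = self.recent[:-self.max_recent]
            self.recent = self.recent[-self.max_recent:]
            parts = [self.summary] if self.summary else []
            parts.append(summarise(overflow))
            self.summary = " / ".join(parts)

    def context(self):
        """Return what the model would see: summary first, then recent turns."""
        header = [f"[summary] {self.summary}"] if self.summary else []
        return header + self.recent

memory = CompactingMemory(max_recent=2)
for turn in [
    "Table 4 has been here before",
    "They prefer the fish",
    "They asked about allergies to nuts and shellfish today",
    "They would like the bill",
]:
    memory.add(turn)

for line in memory.context():
    print(line)
```

Notice that the first two turns are gone from the verbatim list but still live on in the summary. That is the point to remember when you are deciding what to paste into a long conversation.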

Research from the Oxford NLP Group on long-context language modelling, published at nlp.cs.ox.ac.uk, traces how this problem was identified and the approaches researchers developed to address it.

One practical note here: if you are feeding sensitive client information into an AI tool during a long conversation, context compaction means the model is building and retaining a compressed version of everything you have shared.

The waitstaff's notepad does not disappear between sessions in all tools. Before you start pasting contracts, client details, or confidential business data into any AI window, our AI security guide: what's safe to share covers exactly what is fine, what is risky, and what to avoid.

📱 Where Are You Already Using NLP?

Once you start noticing NLP in action, you'll see it everywhere:

How Do Voice Assistants Use NLP?

Voice assistants like Siri, Alexa, and Google Assistant use NLP to turn your spoken questions into text, understand what you meant, and keep track of short conversations over multiple turns.

Siri, Alexa, Google Assistant. Always ready to misunderstand you in creative new ways. The genuine improvement over the past three years is context.

Earlier versions treated each query in isolation.

Current versions maintain conversational context across a short exchange, which is why you can now say “What’s the weather tomorrow?” and follow it with “What about the day after?” without the assistant losing the thread.

How Do Autocorrect And Predictive Text Use NLP?

Autocorrect and predictive text use NLP models trained on huge amounts of writing (plus your own messages) to guess what you meant to type and which words you are likely to use next.

The system that insists you meant “ducking” has processed enough clean, family‑friendly text that a specific four‑letter word appears far less frequently in its training data than its polite substitute. The pattern wins. Your intention loses.
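Here is a minimal sketch of "the pattern wins", using a harmless example. The word counts are made up, and the rule (swap in a neighbouring word if it is at least 100 times more common) is an assumption chosen for illustration, not how any real keyboard works.

```python
# Hypothetical word counts, invented for illustration.
WORD_COUNTS = {"the": 5000, "thy": 3, "tho": 8, "than": 900}

def one_letter_apart(a, b):
    """True if the words have the same length and differ in exactly one letter."""
    return len(a) == len(b) and sum(x != y for x, y in zip(a, b)) == 1

def autocorrect(typed, counts=WORD_COUNTS, keep_ratio=100):
    """Replace the typed word if a near-identical word is far more common."""
    typed_count = counts.get(typed, 0)
    candidates = [word for word in counts if one_letter_apart(typed, word)]
    if not candidates:
        return typed
    best = max(candidates, key=counts.get)
    # Override what was typed only when the alternative is much more frequent.
    if counts[best] > keep_ratio * max(typed_count, 1):
        return best
    return typed

print(autocorrect("thw"))   # prints the
print(autocorrect("thy"))   # prints the
print(autocorrect("than"))  # prints than
```

The second line is the interesting one. "Thy" is a real word, typed on purpose, and it still gets replaced because "the" is so much more common.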

The more interesting part: predictive text on modern phones now uses a local, on‑device NLP model that has learned from your specific writing patterns over time.

It knows you always text your mum on Sunday evenings and that you typically use complete sentences with her. It adjusts accordingly.

How Do Spam Filters Use NLP?

Spam filters use NLP to analyse the wording, tone, and structure of emails so they can spot suspicious messages even if they have never seen that exact text before.

Your inbox’s bouncer.

Deep learning spam filters now use NLP to understand the intent and tone of an email, not just flag known trigger phrases.

A message that has never been seen before can still be correctly identified as spam because its sentence structure, urgency framing, and request pattern match what spam tends to look like.
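To show how a filter can flag a message it has never seen, here is a sketch using Naive Bayes, a much older and simpler technique than the deep learning filters described above. The six training messages are made up, and a real filter learns from millions of emails and far richer signals than individual words.

```python
import math
from collections import Counter

# Tiny made-up training set: (message, label).
TRAINING = [
    ("urgent act now to claim your prize", "spam"),
    ("verify your account immediately or lose access", "spam"),
    ("claim your free reward now", "spam"),
    ("meeting moved to three tomorrow", "ok"),
    ("here are the notes from our call", "ok"),
    ("can you send the invoice tomorrow", "ok"),
]

def train(examples):
    """Count how often each word appears under each label."""
    word_counts = {}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts.setdefault(label, Counter()).update(text.split())
    vocab = set()
    for counts in word_counts.values():
        vocab.update(counts)
    return word_counts, label_counts, vocab

def classify(text, model):
    """Return the label whose word patterns best match the message."""
    word_counts, label_counts, vocab = model
    total_examples = sum(label_counts.values())
    scores = {}
    for label in label_counts:
        total_words = sum(word_counts[label].values())
        score = math.log(label_counts[label] / total_examples)
        for word in text.lower().split():
            if word in vocab:  # skip words never seen in training
                # Add-one smoothing so an unseen pairing never scores zero.
                score += math.log((word_counts[label][word] + 1)
                                  / (total_words + len(vocab)))
        scores[label] = score
    return max(scores, key=scores.get)

model = train(TRAINING)
print(classify("act immediately to claim your free account", model))  # prints spam
```

That exact sentence is not in the training set. It is flagged because its words match the pattern of the spam examples.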

🧠 Quick fact: NLP isn't just about text. It also powers speech-to-text tools like Otter.ai and Zoom transcripts, turning spoken words into searchable text in real time.

How Does Sentiment Analysis Use NLP?

Sentiment analysis uses NLP to scan large volumes of text and work out whether the overall tone is positive, negative, neutral, or mixed.

Businesses use this to process customer reviews, social media mentions, and support tickets at scale.

Instead of someone reading every review manually, NLP identifies whether the tone is positive, negative, neutral, or mixed, and flags the ones that need human attention.

The practical limit: sarcasm still causes significant problems. "Oh, the delivery took three weeks. Brilliant service." registers as positive in unsophisticated systems because "brilliant" is a positive word.
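You can see that failure in a few lines. This is a deliberately unsophisticated sketch that counts positive and negative words. The word lists and the name `naive_sentiment` are made up for this example.

```python
# Hypothetical word lists, hand-written for illustration.
POSITIVE = {"brilliant", "great", "love", "excellent", "fast"}
NEGATIVE = {"terrible", "slow", "broken", "awful", "late"}

def naive_sentiment(text):
    """Label text by counting positive words minus negative words."""
    words = [word.strip(".,!?\"'").lower() for word in text.split()]
    score = sum(word in POSITIVE for word in words)
    score -= sum(word in NEGATIVE for word in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

review = "Oh, the delivery took three weeks. Brilliant service."
print(naive_sentiment(review))  # prints positive
```

The code sees "brilliant" and nothing else. It has no idea that three weeks is a long time to wait for a delivery, which is exactly the context a human reader uses to spot the sarcasm.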

Adaptive Thinking models handle this better, but it is not fully solved.

Paste in your reviews, ask for a sentiment breakdown, and you have a clear picture of what your customers actually think in seconds. No dashboard required.

❓Why Does NLP Still Get Things Wrong?

NLP is genuinely impressive, but it’s also fallible in predictable ways.

Most of the mistakes that catch people out follow a specific pattern, and our 5 common AI mistakes that beginners make (and how to fix them fast) covers the ones that come up most often when people start using these tools in their business.

Why does NLP struggle with sarcasm?

NLP often misses sarcasm, irony, and implied meanings because human language relies heavily on what’s not said, requiring cultural context that models can only approximate.

Irony, understatement, implied criticism, and rhetorical questions all require cultural context and real-world understanding that NLP models approximate rather than truly possess.

Adaptive Thinking helps. It does not fully solve it.

When the stakes matter, a human still needs to review tone-sensitive communications before they go out.

Why does NLP struggle with cultural variations?

NLP struggles with cultural and regional differences because language isn’t uniform. Meanings shift even within the UK, let alone across cultures.

"Quite good" means different things in different parts of the UK alone, let alone across languages and cultures. NLP systems trained primarily on one variety of text will misread another.

This is worth knowing if you are using AI tools for customer communications aimed at an international audience. Test the outputs with people who know the target culture. Do not assume the system does.

Why does NLP reflect human biases?

NLP models learn from human-written text, absorbing biases embedded in language, so common framings in training data become default outputs.

NLP models learn from text written by humans, which means they absorb human biases, including the ones baked into language itself.

The framing that appears most often in the training data becomes the default framing in the outputs.

This connects directly to the patterns problem covered in Blog 1: Machine Learning for Beginners.

The training data is the foundation. If the foundation has gaps or skews, the model's language reflects them. Human oversight on anything that carries real consequences is not optional.

🚀 What Does NLP Mean For Your Business?

NLP delivers practical benefits for businesses today without needing a technical team, as it’s already built into everyday tools you’re using or considering.

How does NLP improve customer service?

NLP powers chatbots, automated email responses, and smart routing to handle customer service queries efficiently around the clock.

Chatbots handling common queries at any hour. Automated email responses for routine questions. Smart routing that sends urgent issues to a human and handles the rest automatically.

The 2026 caveat: automated responses now need to be labelled as such in many jurisdictions. Build that disclosure into your setup from the start rather than retrofitting it later.

How does NLP improve content creation?

NLP streamlines content and communication by generating drafts for emails, social posts, and product descriptions while analysing customer feedback at scale.

Sentiment analysis on customer feedback at scale. Subject line testing before you commit to a campaign.

The pattern that works: use NLP tools to produce the first draft and handle volume. Use your own judgement for tone, accuracy, and anything that carries your name.

How does NLP uncover customer needs?

NLP tools process vast amounts of customer reviews quickly to reveal hidden themes and patterns you’d miss manually.

Not to replace customer conversations. To make sure you are not missing signals that are hiding in the volume.

⚖️ What Are The Key NLP Takeaways?

NLP is the waitstaff between you and the deep learning kitchen. It translates your messy human input into something the model can process.

Tokens are Lego bricks. Words get broken into fragments that can be recombined for any input, including words the system has never seen before.

Embeddings are a giant map. Meaning is location. Words that appear in similar contexts sit close together, which is how the system handles ambiguity and context.

Attention is a spotlight. The model focuses on the most relevant words to resolve context, not the whole sentence equally.

Adaptive Thinking means 2026 models choose how hard to think. Simple inputs get quick responses. Ambiguous ones get more processing time.

Context compaction means long conversations no longer fall apart. Old context is compressed, not dropped. That compressed context still exists, which is why data hygiene matters.

The August 2026 labelling requirement is real. AI-generated customer communications need to be identifiable as such under the EU AI Act.

Sarcasm, cultural nuance, and implied meaning are still genuine limitations. Human review matters for anything tone-sensitive.

🔮 Next in This Series

How to Use AI: 4 Simple Ways Beginners Can Start Today

You now know how AI learns patterns, processes them in layers, and turns them into language.

The only question left is: what do you actually do with that on a Monday morning?

This final post in the series gives you four concrete starting points you can try today, no technical background needed.

📚 Not ready to move on yet? If you want to see exactly which tools are worth starting with and how to get reliable results from them, the Free Beginner's AI Cheat Sheet breaks it down without the jargon.

No Jargon AI

Making AI make sense - one prompt at a time

Declaration

Some links on this site are affiliate links.

I may earn a small commission, but it doesn't cost you anything extra.

I only recommend tools I trust.

Thank you for your support

Location

Based in Mansfield, Nottinghamshire

Simplifying AI for beginners, no matter where you're starting from.

All Rights Reserved.